This post will cover the investigation into two new segments.

Part 1 - Customer success teams

Part 2 - Large customer support teams

How I moved from UX into CS/CX

After concluding that UX Interview Parsing wasn’t the problem to stay with, I decided to follow up with some leads developed during the pilots. When sharing what I was doing for Product teams, I had several ex-colleagues in the CS space reach out to ask if this solution could help them improve their client relationships. After I closed down the UX project, I felt this could align with my vision of helping SaaS teams better understand their customers and provide a better experience for them.

Part 1 - Customer Success

TL;DR: After mapping out the most significant problems and the existing solutions available to Customer Success teams, I realized there was nothing I could build that would deliver 10x the value of what was already available to them in the market. In this research, people who used Planhat were the happiest, whereas Gainsight was pushing the most aggressive roadmap.

Approach

To validate the needs of this segment, I conducted a series of user interviews and then ran a survey to check the findings from those interviews. Overall, the process took about four weeks to complete.

The project aimed to understand the most significant pain points that customer success managers face in carrying out their role.

Target Profiles

Initially, I included agencies in the target profile; however, after three interviews with small-medium sized agencies, I realized they are highly averse to paying for tooling, so I omitted them from future interviews.

Interview profiles - Customer Success Managers/Directors/VPs and Revenue Operations Managers

Participant criteria: Directly responsible for one or more accounts and are directly measured on client satisfaction.

Sample of organization profiles - Slack, LinkedIn, Qualtrics, Accenture, Cascade, Workvivo, Oliver Wyman

Research Questions (what we want to find out)

What are the top measures of success in their role, and how are they measured/tracked?

What is the primary workflow for attaining this?

What are the biggest points of friction when completing this workflow?

What have they done to solve it today?

How frequently does this issue occur?

How much does this problem cost?

Methodology

14 generative user interviews

A mix of JTBD and Mom Test

Survey (41 responses)

Assumptions

Their role is to ensure their clients get the most from their service/product.

A primary measure of this is the client’s satisfaction

Interview Findings

High-level themes

There was a lot of overlap between challenges; however, across the 14 interviews, these six items came up as the top areas of focus.

Customer profiling/categorization

Product Metrics

Predicting Churn

Comms between Sales/CSM

Client action reminders

Account Insights

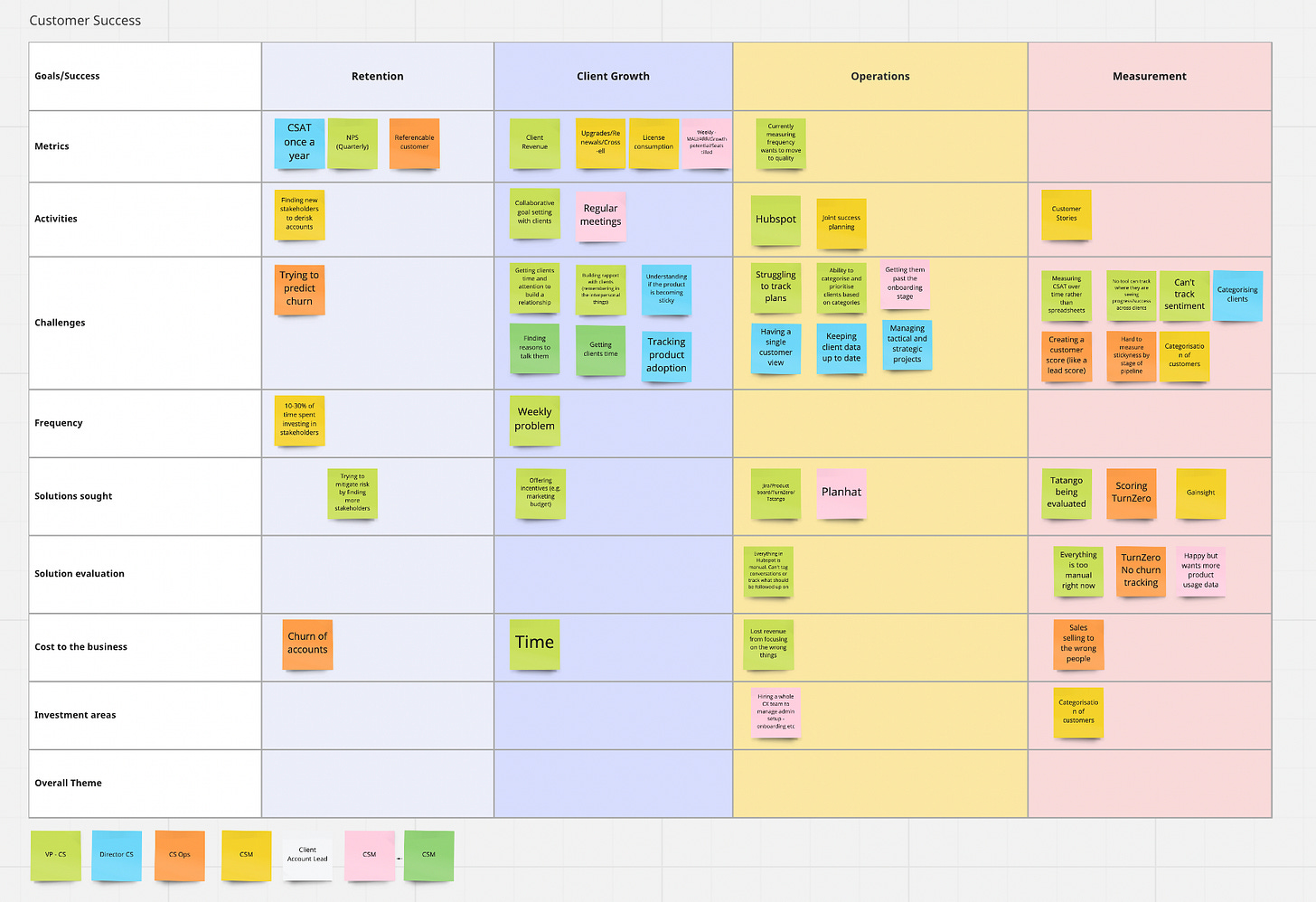

I created the Miro board below to help me categorize where the different action types occurred.

Challenges

Digging deeper into the above themes, I extracted the following challenges participants had.

It takes too much time to keep track of actions and initiatives by the client.

Customer success data (CSAT, NPS, etc.) has too small a base size to be reliable.

Information (support tickets, notes, LTV, etc.) about clients is too fragmented across the business, and we lack a single view.

Analyzing and categorizing clients to serve their needs better

Predicting churn

Building relationships with more stakeholders in a client account

Improving how projects and time are allocated to clients based on their value to the business
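To illustrate the base-size challenge from the list above, here is a minimal sketch of how the margin of error on a satisfaction percentage shrinks as responses increase. It uses the standard normal approximation for a proportion; the 80% satisfaction rate and the response counts are hypothetical values chosen for illustration, not figures from the interviews.

```python
import math


def margin_of_error(p: float, n: int, z: float = 1.96) -> float:
    """Approximate margin of error for a proportion p observed across n responses.

    z = 1.96 corresponds to a 95% confidence level under the normal approximation.
    """
    if n <= 0:
        raise ValueError("n must be a positive number of responses")
    return z * math.sqrt(p * (1 - p) / n)


# Hypothetical example: 80% of respondents say they are satisfied.
for n in (20, 100, 400):
    moe = margin_of_error(0.8, n)
    print(f"n={n:>3}: 80% +/- {moe * 100:.1f} points")
```

With only a couple of dozen responses per account, the uncertainty is wide enough that a swing of several points between quarters may not mean anything.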

As the themes repeated, I concluded the interviews and mapped them out by category below. Once the repeat issues became clear, I moved to the survey design stage.

Survey Findings

You can access the full report (41 respondents) below.

I would love to hear in the comments what conclusions you arrived at from the survey report.

Conclusion

Based on Questions 9 and 12 of the survey, I concluded that the pain point wasn’t severe enough to justify building something new. Combined with my first-hand impressions from the interviews, I felt that an offering would need to be substantially different from what already exists to make a dent in this market.

A list of competitors in the space can be found here on G2. From this research, Planhat and Gainsight appeared to be the most promising. Also, from my assessment, I could not see anything that I could develop that would be 10x better than what the current competition was already doing. The final nail in the coffin was that this audience was the most challenging group to get a hold of, which led me to realize that I also did not have a strong enough go-to-market strategy.

Part 2 - Customer Support teams

TL;DR: A significant opportunity exists to help teams automate CSAT scoring and root cause analysis. This opportunity is also highly feasible from a technology perspective. However, I decided not to pursue it as I could not get excited about the work.

Overview

This section will be relatively short as I concluded the research after four interviews. After meeting with Senior Directors at large consulting firms such as Accenture, Deloitte, and PwC, I identified a substantial opportunity in the area of customer support teams.

The key areas that these organizations needed support in were:

Transcript/ticket indexing

Quality Control

Ticket analytics

CSAT measurement

Context

These consulting firms manage large supplier and customer support centers for enterprise-size companies. In some cases, they are hired to manage another vendor who then carries out the operational work.

Challenges

These firms have to pay staff to perform quality control analysis. In some instances, they are contractually required to hire one quality control specialist for every 15 agents. In addition, they may carry out an in-depth analysis of a sample of call volume if targets aren’t being met.
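As a rough illustration of how that staffing ratio scales, here is a minimal sketch that computes the quality control headcount implied by one specialist per 15 agents. The ratio comes from the interviews; the agent counts are hypothetical, and rounding up to a whole person is my assumption about how such a clause would be applied.

```python
import math

AGENTS_PER_QC_SPECIALIST = 15  # ratio mentioned by interviewees


def qc_specialists_required(agents: int, ratio: int = AGENTS_PER_QC_SPECIALIST) -> int:
    """Smallest whole number of QC specialists that covers the given agent count."""
    if agents < 0:
        raise ValueError("agents must not be negative")
    # Assumption: a partially filled group of agents still needs a full specialist.
    return math.ceil(agents / ratio)


# Hypothetical support center sizes.
for agents in (15, 16, 500):
    print(f"{agents} agents -> {qc_specialists_required(agents)} QC specialists")
```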

Another layer to this challenge was that agents were hitting performance metrics such as turnaround time (TAT), service level agreements (SLAs), and time to first response, yet overall they were tanking on Customer Satisfaction Scores (CSAT). These teams had no intelligent way to sample more than 15% of calls without bringing in costly consultants.

Let’s talk about CSAT.

Another issue encountered was agents introducing bias into CSAT scores through how they asked a customer to complete the survey. Today, only about 3-4% of customers complete CSAT surveys, which leaves a colossal blind spot around end-customer satisfaction.

Conclusion

I believe that there is a massive opportunity for the taking. Back-of-the-napkin TAM calculations put it at 40b+. Consulting firms are not experts in software development, so they tend to throw people at the problem rather than technology. Using NLP and speech analysis, a relatively accurate model could be developed to extract common issue types and create an inferred CSAT score that covers 100% of interactions vs. the 3-4% surveyed today.
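To make the idea of an inferred CSAT score concrete, here is a deliberately naive sketch. It is not the model described above: it swaps in simple keyword matching where a real system would use trained NLP and speech-to-text, and it assumes calls have already been transcribed. The keyword lists, the 1-5 scoring rule, and the sample transcripts are all hypothetical.

```python
from collections import Counter

# Hypothetical keyword lists; a real system would learn these from labeled data.
ISSUE_KEYWORDS = {
    "billing": ("invoice", "charge", "refund"),
    "login": ("password", "login", "locked"),
    "shipping": ("delivery", "late", "tracking"),
}
NEGATIVE = {"frustrated", "angry", "useless", "cancel", "terrible"}
POSITIVE = {"thanks", "great", "resolved", "perfect", "helpful"}


def tokenize(text: str) -> list[str]:
    """Lowercase words with surrounding punctuation stripped."""
    return [word.strip(".,!?").lower() for word in text.split()]


def tag_issues(text: str) -> list[str]:
    """Return the issue types whose keywords appear in the transcript."""
    words = set(tokenize(text))
    return sorted(issue for issue, kws in ISSUE_KEYWORDS.items() if words & set(kws))


def inferred_csat(text: str) -> int:
    """Map (positive - negative) keyword hits onto a 1-5 scale, starting from 3."""
    words = tokenize(text)
    balance = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    return max(1, min(5, 3 + balance))


transcripts = [
    "I was charged twice and I am frustrated, I want a refund.",
    "Thanks, my password reset worked and the issue is resolved!",
    "Where is my delivery? The tracking page is useless.",
]

# Every transcript gets a score, so coverage is 100% rather than survey-only.
scores = [inferred_csat(t) for t in transcripts]
issue_counts = Counter(issue for t in transcripts for issue in tag_issues(t))
print(scores, dict(issue_counts))
```

The point of the sketch is the shape of the pipeline, where every interaction is scored and tagged, rather than the scoring rule itself, which would need validating against real survey responses.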

This was a weird opportunity to walk away from because it was the most promising one yet, but as I began ideating solutions, I found the work to be a slog. I am not averse to getting excited about unsexy ideas, but for this problem, I asked myself how excited I could get about improving operational support. Unfortunately, the answer was not very.

I want to say a huge thank you to everyone who participated in this research. I’m sorry that I couldn’t find better solutions for you, but hopefully, this research will allow others to succeed where I have not.

This will be the last in my pivot series, for now, I hope. If you have gone through a project and want to share your story, please reach out.